
Download Microsoft. The Report Part Gallery is also included in this release, taking self-service reporting to new heights by enabling users to reuse existing report parts as building blocks for creating new reports in a matter of minutes with a “grab and go” experience. Additionally, users will experience significant performance improvements with enhancements to the ability to use Report Builder in server mode. This allows for much faster report processing, with caching of datasets on the report server when toggling between design and preview modes. Users can transform mass quantities of data with incredible speed into meaningful information to get the answers they need within seconds. This version also includes Report Builder server mode and the ability to launch Report Builder directly from a Report Server.

The download provides a Report Viewer Web part, Web application pages, and. Windows SharePoint Services 3. Microsoft Office SharePoint Services 2.

For more information, please see Administering Servers by Using Policy Based Management in SQL Server 2. R2 Books Online. Note: This component also requires Windows Installer 4. Audience(s): Customer, Partner, Developer. Package (SQLServer. Best. Practices. Policies.

Microsoft. Using Microsoft Sync Framework, developers can build applications that synchronize data from any source using any protocol over any network.

The download contains the files for installing SQL Server Compact 3. 5 SP 2 on Windows desktop.

The SQL Server JDBC Driver 3. Resolving this issue as this looks like a connection problem and there has been no feedback in the past three months. If this is still an issue, please reopen this bug.

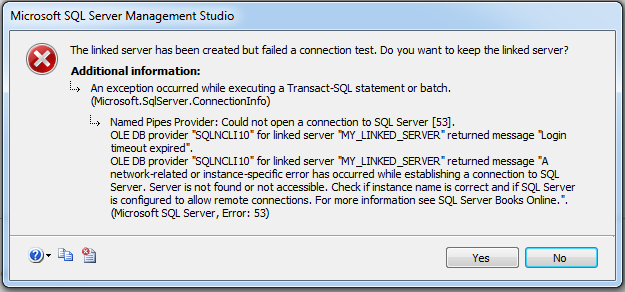

SQL Server users at no additional charge, and provides access to SQL Server 2. R2 , SQL Server 2. SQL Server 2. 00. SQL Server 2. 00. Java application, application server, or Java-enabled applet. This is a Type 4 JDBC driver that provides database connectivity through the standard JDBC application program interfaces (APIs) available in Java Platform, Enterprise Edition 5. It has been tested against major application servers including IBM WebSphere and SAP NetWeaver. Audience(s): Partner, Developer.

Microsoft. The component is designed to be used with the Enterprise and Developer editions of SQL Server 2.

Hello masters, we have been facing an issue for the last 3 days. During job execution (SSIS) we are getting a login timeout. We request your expert help.

2 Features of OraOLEDB. This chapter describes components of Oracle Provider for OLE DB (OraOLEDB) and how to use the components to develop OLE DB consumer applications.

OLE DB provider 'SQLOLEDB' reported an error. Execution terminated by the provider because a resource limit was reached. The user does not have permission to access the data source. If you are using a SQL Server database, verify that the user has a valid database user login.

R2 Integration Services. To install the component, run the platform-specific installer for x. Itanium computers respectively. For more information, see the Readme and the installation topic in the Help file. Audience(s): Partner, Developer.

Microsoft. The component consists of a client-side DLL that is linked into a user application, as well as a set of stored procedures to be installed on SQL Server.

Visit the SQL Server 2. Books Online page on the Microsoft Download Center. Audience(s): Developer, DBA.

Microsoft. Upgrade Advisor identifies feature and configuration changes that might affect your upgrade, and it provides links to documentation that describes each identified issue and how to resolve it.
It contains run-time support for applications using native-code APIs (ODBC, OLE DB and ADO) to connect to Microsoft SQL Server 2. SQL Server Native Client should be used to create new applications or enhance existing applications that need to take advantage of new SQL Server 2. R2 features. This redistributable installer for SQL Server Native Client installs the client components needed during run time to take advantage of new SQL Server 2. R2 features, and optionally installs the header files needed to develop an application that uses the SQL Server Native Client API. Audience(s): Customer, Partner, Developer.

Microsoft. MSXML 6. XML 1. 0, XML Schema (XSD) 1. XPath 1. 0, and XSLT 1. In addition, it offers 6. XML data, and improved reliability over previous versions of MSXML. Audience(s): Partner, Developer.

Microsoft. SQL Server developers and administrators can use the data provider with Integration Services, Analysis Services, Replication, Reporting Services, and Distributed Query Processor. Run the self-extracting download package to create an installation folder. The single setup program will install the Version 3. IA6. 4 computers. Read the installation guide and release notes for more information.

The bcp utility bulk copies data between an instance of Microsoft SQL Server 2. R2 and a data file in a user-specified format. The bcp utility can be used to import large numbers of new rows into SQL Server tables or to export data out of tables into data files. Note: This component requires both Windows Installer 4. Microsoft SQL Server Native Client (which is another component available from this page). Audience(s): Customer, Partner, Developer.

Microsoft. By doing this, CPU-intensive or long-duration tasks can be offloaded out of SQL Server to an application executable, possibly on another computer. The application executable can also run under a different Windows account from the Database Engine process.
This gives administrators additional control over the resources that the application can access. Run the self-extracting download package to create an installation folder. Read Books Online for more information. The single setup program will install the service on x. IA6. 4 computers. Read the documentation for more information. Audience(s): Customer, Developer.

Microsoft. The SQL Server PowerShell Provider delivers a simple mechanism for navigating SQL Server instances that is similar to file system paths. PowerShell scripts can then use the SQL Server Management Objects to administer the instances. The SQL Server cmdlets support operations such as executing Transact-SQL scripts or evaluating SQL Server policies. Note: Windows PowerShell Extensions for SQL Server requires SQL Server 2. R2 Management Objects, also available on this page. This component also requires Windows PowerShell 1. 0; download instructions are on the Windows Server 2. Web site. Audience(s): Customer, Partner, Developer.

Microsoft. This object model will work with SQL Server 2. SQL Server 2. 00. SQL Server 2. 00. SQL Server 2. 00. R2. Note: Microsoft SQL Server 2. R2 Management Objects Collection requires Microsoft Core XML Services (MSXML) 6. Microsoft SQL Server Native Client, and Microsoft SQL Server System CLR Types.

ADOMD.NET is a Microsoft ADO.NET provider with enhancements for online analytical processing (OLAP) and data mining. Note: The English ADOMD.NET setup package installs support for all SQL Server 2. R2 languages. Audience(s): Customer, Partner, Developer.

Microsoft. This provider implements both the OLE DB specification and the specification’s extensions for online analytical processing (OLAP) and data mining. Note: Microsoft SQL Server 2. R2 Analysis Services OLE DB Provider requires Microsoft Core XML Services (MSXML) 6. Audience(s): Customer, Partner, Developer.

Microsoft. The download includes the following components.
Table Analysis Tools for Excel: This add-in provides easy-to-use tools that leverage SQL Server 2. Two new tools have. Prediction Calculator and Shopping Basket Analysis.

Data Mining Client for Excel: This add-in enables you to go through the full data mining model development lifecycle within Excel 2. SQL Server 2. 00. Analysis Services instance. This release adds support for new SQL Server 2. Document Model wizard, and.

Data Mining Templates for Visio: This add-in enables you to render and share your mining models as annotatable Visio 2.

The controls in this library display the patterns that are contained in Analysis Services mining models.

The SQLSRV extension provides a procedural interface while the PDO. The drivers work with all editions of SQL Server 2. SQL Azure, and rely on the Microsoft SQL Server Native Client to communicate with SQL Server. The SQL Server Native Client can be downloaded on this Feature Pack page. Audience(s): Developer.

SQL Server Integration Services.

This new DBA would likely spend the entire first week getting to know the lay of the land, using SQL Server Management Studio (SSMS) to connect to each SQL Server machine, one by one, to gather essential information such as version, edition, server configuration, existing databases, and scheduled backup jobs. A daunting task indeed. I learned early in my career that spending time up front to automate otherwise manual and time-consuming tasks can preserve your sanity. I therefore developed a fairly simple solution that connects to each available SQL Server machine, pulls information into a central repository database, and feeds the combined data to a report for DBAs and other IT staff to use. In this article I describe the solution I used.

For example, you can use the Microsoft SQL Server Health and History Tool to populate a repository database. However, this tool is outdated and not very flexible.
In a later article I'll explain how to build and deploy the three SSRS reports designed to deliver the data from this repository. (If you stringently adhere to best practices and use only supported techniques, you'll need to withhold your judgment temporarily, until you see that the nonstandard methods I use are efficient and aren't detrimental.)

Create the Repository Database

Now that you have a list of servers to use as input, you might wonder how you can use that input directly in an SSIS package. But don't get ahead of yourself: first, you must store the information somewhere. As most DBAs know, the best place to store a list of data is in a table. Before we examine the SSIS package, let's take a look at the database that will be the repository for the SSIS load. The table that will store the list of servers on the network from the Sqlcmd command is called ServerList. In addition to this table, six other base tables in the DBA. These tables are SQL. Each of these six tables holds specific information about each SQL Server instance. Web Listing 1 contains each table's schema; this listing also serves as the script to build the database that the SSIS package populates. To run this script successfully, you need to create a blank database called DBA. After you create the DBA.

Although I didn't automate this process, you can easily do so with SSIS techniques similar to the ones I discuss below. I used the special stored procedure xp. Assuming that you've run the script to create the DBA. In SSMS, open a new query window and enter the following command: USE DBA.

First, the result set returns NULL records. The table can accommodate NULL records, and the SSIS package's logic will in turn filter out these rows. You could build in logic to take care of the NULL values before the insertion, but I chose to do it as part of the SSIS package.
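As a rough illustration of the filtering step just described, here is a minimal Python sketch that drops NULL and blank rows from a raw server list and trims padding from each name. It is not the SSIS logic itself; the function name `clean_server_list` and the sample server names are hypothetical, and the input is assumed to be one server name per row.

```python
from typing import Iterable, List, Optional


def clean_server_list(rows: Iterable[Optional[str]]) -> List[str]:
    """Drop NULL/blank rows and trim padding from raw server-name rows."""
    cleaned = []
    for row in rows:
        if row is None:          # NULL record returned by the load step
            continue
        name = row.strip()       # remove padding around the server name
        if name:                 # skip rows that were only whitespace
            cleaned.append(name)
    return cleaned


# Hypothetical raw rows, as they might look after loading the table.
raw_rows = [None, "SQLPROD01   ", "SQLDEV02\\INST1", None, ""]
servers = clean_server_list(raw_rows)
print(servers)
```

Filtering at read time, rather than before insertion, mirrors the choice made in the article: the holding table stays simple and the cleanup lives in one place.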
Because this table has no defined indexes that require unique values, truncating the table also ensures that no duplicate rows occur each time the Sqlcmd /Lc command loads the table. You also need to ensure that xp. By default, xp. Fortunately, SQL Server 2.

My solution queries each server and stores this information in the SQL. The Databases table simply holds the name of the server and the name of the database. You can use the Server field to join the Database. (Although this solution wasn't ideal, it was sufficient for my purposes.)

The final three tables store information about SQL Server Agent jobs and database backups, which I believe is the most important information for any DBA to have. For example, knowing which jobs are failing and need attention is imperative when you're working with hundreds or even tens of database servers and databases. Jobs often fail, and because most jobs perform routine full and transaction log backups, the response must be swift when they do. I've found that 5 is a good number of days of history to analyze.

SSIS might seem like a complex design environment if you've never used it. Many DBAs use DTS for SQL Server 2. ETL). Figure 1 shows the full package that I used to populate the repository database. This package consists of three areas: (1) migrating and/or truncating repository tables to maintain the repository, (2) populating a variable with an ADO record set of server names derived from the command-line utility Sqlcmd, and (3) using this variable to programmatically connect to each server, one by one, and pull information into the repository.

Truncating tables and migrating data to archive tables occur first in the SSIS package. The Execute SQL Task objects that run the Truncate Table statements are grouped together in a sequence container at the top of the package. When the package runs, all the tables are initially truncated; the only exception is the Jobs table.
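The ordering of that first phase (copy history to an archive table, then empty the working tables) can be sketched outside SSIS. The example below uses Python's built-in `sqlite3` module as a stand-in for SQL Server, so it uses `DELETE` where the package uses Truncate Table. The table names `databases`, `jobs`, and `jobs_archive` and the helper `reset_repository` are hypothetical, chosen only for illustration.

```python
import sqlite3


def reset_repository(conn, truncate_tables, archive_pairs):
    """Copy rows to archive tables, then empty every repository table."""
    with conn:  # one transaction: archive and clear together
        for source, archive in archive_pairs:
            # Table names come from a fixed internal list, never user input.
            conn.execute(f"INSERT INTO {archive} SELECT * FROM {source}")
        for table in truncate_tables:
            conn.execute(f"DELETE FROM {table}")


conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE databases (server TEXT, name TEXT);
CREATE TABLE jobs (server TEXT, job TEXT, outcome TEXT);
CREATE TABLE jobs_archive (server TEXT, job TEXT, outcome TEXT);
INSERT INTO databases VALUES ('SQLPROD01', 'Sales');
INSERT INTO jobs VALUES ('SQLPROD01', 'Nightly backup', 'Failed');
""")

reset_repository(conn, ["databases", "jobs"], [("jobs", "jobs_archive")])
archived = conn.execute("SELECT COUNT(*) FROM jobs_archive").fetchone()[0]
remaining = conn.execute("SELECT COUNT(*) FROM jobs").fetchone()[0]
print(archived, remaining)
```

Running the archive copy before any delete is the important part: if the order were reversed, the job history would be lost on every run.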
Before truncating the Jobs table, a Data Flow task moves the data from the Jobs table to the Jobs. I wanted to maintain a history of job successes and failures to analyze over time. The other tables need the most current data, and in my opinion, starting fresh each time for this semi-static information is cleaner. As mentioned previously, I'm pulling 5 days' worth of backup history that will repopulate with each run. Figure 2 shows the dialog box to configure the Execute SQL Task object to truncate the SQL.

When all the objects in the Truncate Tables and Populate Archives sequence container complete successfully, the package moves to the second phase, which is to populate a variable with an ADO record set. Before I explain how to populate a variable from a SQL Server query to use within an SSIS package, let me explain why you might want to do so. If you have fewer than 1. In SSIS you'd have 1. Connection Managers, each pointing to one SQL Server machine. More than 1. 0 servers is problematic, but a tenacious DBA might be willing to create separate connections for, say, 2. DBA is willing to manually add servers and maintain the package indefinitely. In my case, I had more than 1. I needed a better solution.

The task to populate the variable uses a simple SELECT statement to query the ServerList. The query is: SELECT RTRIM(Server) AS <servername> FROM ServerList. A direct input query to the DBA. To use the query results to populate a variable, you need to have a variable already set up. For my solution, I needed to set up two variables: one for the Execute SQL Task object, and one for the final third of the package, which uses Foreach Loop container objects. The five Foreach Loop containers (i. DBA. Figure 4 shows the Variables toolbar with two defined variables of two different data types. The first, SRV. The second, SQL. These distinctions are important. Because the result set from the SELECT statement contains multiple records, a string variable doesn't work.
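To make the two-variable idea concrete, here is a small Python sketch of the same pattern: a multi-row result set held in one object, with each row handed to a single string on every pass of a loop and used to build a per-server connection string. This is an analogy, not SSIS code. The function names, the sample server names, and the connection string keywords (an OLE DB style string using the SQL Server Native Client provider name `SQLNCLI10` with integrated security) are assumptions for illustration.

```python
from typing import Iterable, Iterator, Tuple


def connection_string(server: str, database: str = "master") -> str:
    """Build an OLE DB style connection string for one server (assumed format)."""
    return (
        "Provider=SQLNCLI10;"
        f"Data Source={server};"
        f"Initial Catalog={database};"
        "Integrated Security=SSPI;"
    )


def enumerate_servers(
    record_set: Iterable[Tuple[str]],
) -> Iterator[Tuple[str, str]]:
    """Yield (server name, connection string) for each row of the record set."""
    for (server_name,) in record_set:   # object variable -> string variable
        yield server_name, connection_string(server_name)


# Hypothetical result of the server-list query: one single-column row per server.
record_set = [("SQLPROD01",), ("SQLDEV02\\INST1",)]
for name, conn_str in enumerate_servers(record_set):
    print(name, "->", conn_str)
```

The point of the split is the same as in the package: the collection holds every row, while the string holds only the current server, so adding a server means adding a row rather than another hand-built connection.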
I needed to use the SQL. Object to hold the results, then map the two variables, object to string, in the Foreach Loop container. I used the following four simple steps to accomplish this task.

When the Foreach Loop container executes the Data Flow tasks it contains, the connection string is dynamically built with each enumeration of servername. In this package, the Connection Manager called MultiServer serves this purpose. Setting the variable to the ServerName property, as Figure 8 shows, causes the connections to set themselves correctly for each server.

After the variable mappings are in place, you can use Data Flow objects within each Foreach Loop container to load the tables. You can place Data Flow objects on the SSIS package's Control Flow tab, but these special objects have their own tab on which you can define their properties and sequencing. In general, a Data Flow task consists of a source and a destination object. In my solution, both the source and destination are OLE DB connections to a SQL Server machine. I configured the source to use the MultiServer connection that would enumerate through the list of servers, and I configured the local DBA. Source and destination columns are mapped together. The source can be an object, such as a table or view, or, as in my package, it can be a SQL query to be used as a derived table. For four of the five tables, I sent one query to select values and loaded the results from the remote servers into the local DBA.

To examine the tables' source queries, right-click the Data Flow object within the Foreach Loop container and select Edit. Then, on the Data Flow tab, right-click the OLE DB Source object and select Edit again to display the source query. Web Listing 2 contains the query to load the Databases table.

I needed to handle the Database. Because the SQL Server 2. Master database stores basic information about each database in the sysdatabases table, and each database stores the remaining important information, I needed to query each database individually. I could have used the stored procedure sp. However, this stored procedure doesn't return a single result set. I needed a full result set, so I considered other alternatives. Using temp tables or table variables would have given me the full result set I needed, but setting up and maintaining temp tables is difficult and requires special considerations. For example, you need to create the temp table beforehand, and you must set a value to retain the connection.

My solution was to query each database to return database-specific information in a table that I created in the TempDB database. The table I created wasn't a true temporary table with a # or ## prefix. Although the table resides in the TempDB database, its size and location have minimal effect on the source server. Web Listing 3 contains the code to create and populate this table, called HoldForEachDB. Notice the syntax of the sp. This command is fairly useful, and it doesn't require you to wrap logic into cursors to provide similar functionality. If no errors occur, the package will run in less than 2 seconds for 2 servers and in less than 2 minutes for 3.
